Anthropic claims that its Claude Opus 4.7 model is a step up on all counts, but the jump in visual reasoning, i.e. how well AI 'sees', is huge: from 69.1% to 82.1%*. What does that actually mean, though? To find out, I thought I’d run a little 'kid-friendly' Friday experiment for fun…

The Experiment

I have two children - let's call them Dash and Pixel (not their real names). Like many parents, we sometimes measure our kids' height by having them stand next to the bathroom cupboard door, sticking a book on their head, then drawing a little line on the door. They then scrawl the date and their name next to the line. We don’t usually measure the actual height - we're more interested in the relative changes - although we had measured two of the markings with a tape measure.

Messy height markings and dates on the cupboard door (real names blurred)

The result is a door covered in handwritten names, messy lines and a couple of 'anchor' measurements that our kids love looking at. A great physical memory of their childhood that will stay with us for as long as the door does.

But what would Claude Opus 4.7 make of it? Could it make any sense of the markings, and use them to tell the full story?

The Friday Test

More specifically, from a door that had 30 scrawls (14 for 'Dash', 16 for 'Pixel') and only 2 anchor measurements, I wanted to see if Claude could:

- Read the handwritten text (bearing in mind that many of the scrawls were written by a toddler)?
- Make sense of what it read, i.e. separate out the readings for each kid?
- Pick out the 2 marks on the door that did have real measurements, i.e. 'the anchors'?
- Infer what the height values of the other markings should be based on those anchors (taking into account perspective)?
- Plot them all on a chart in a sensible, visually appealing way?

I used a very simple prompt, without overthinking it:

This is chart of my children's height - their names are Dash and Pixel. On some of the measurements, you'll see the height in cm. On others you won't. By using these measurements can you infer all of the heights. Initially I'd like you to extract them all in a table, then I'd like you to redraw the image with the heights and values.
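For a sense of what the inference step asks for: with two anchors, the simplest approach is a straight-line mapping from a mark's pixel position to centimetres. The Python sketch below shows that idea only. It ignores the perspective correction mentioned in the list, and every pixel position and anchor height in it is invented for illustration rather than read from the door. It is not a description of how Claude arrived at its answers.

```python
def make_height_estimator(anchor_a, anchor_b):
    """Return a function mapping a mark's vertical pixel position to cm.

    Each anchor is a (pixel_y, height_cm) pair. Assumes the camera faces the
    door squarely, so height varies linearly with pixel_y (no perspective).
    """
    (y_a, cm_a), (y_b, cm_b) = anchor_a, anchor_b
    if y_a == y_b:
        raise ValueError("anchors must sit at different pixel heights")
    cm_per_pixel = (cm_b - cm_a) / (y_b - y_a)

    def estimate(pixel_y):
        # Linear interpolation (or extrapolation) from the first anchor.
        return cm_a + (pixel_y - y_a) * cm_per_pixel

    return estimate


# Hypothetical anchors: pixel_y counts down from the top of the photo.
estimate_cm = make_height_estimator((1200, 100.0), (800, 120.0))

# Hypothetical unlabelled marks, given as pixel rows in the same photo.
for pixel_y in (1300, 1000, 700):
    print(f"mark at y={pixel_y}: ~{estimate_cm(pixel_y):.1f} cm")
```

With only two anchors, any lens distortion or camera tilt goes uncorrected, which is part of what makes the task harder than it first looks.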

How did Claude Opus 4.7 fare?

With a single short, one-shot prompt and a single photo (above) as the input, this is how it performed:

- Reading - 100% of the height values correct, 100% of the names correct, 97% of the dates correct. It also accurately picked out the anchor measurements.
- Inferring heights - after the experiment, I measured each mark accurately (see image). It was never more than 2cm out, and usually within 1cm 🤯
- Plotting on a chart - well, I'll let you decide that... Have a look at the chart in the next section.

Claude Opus 4.7 height output

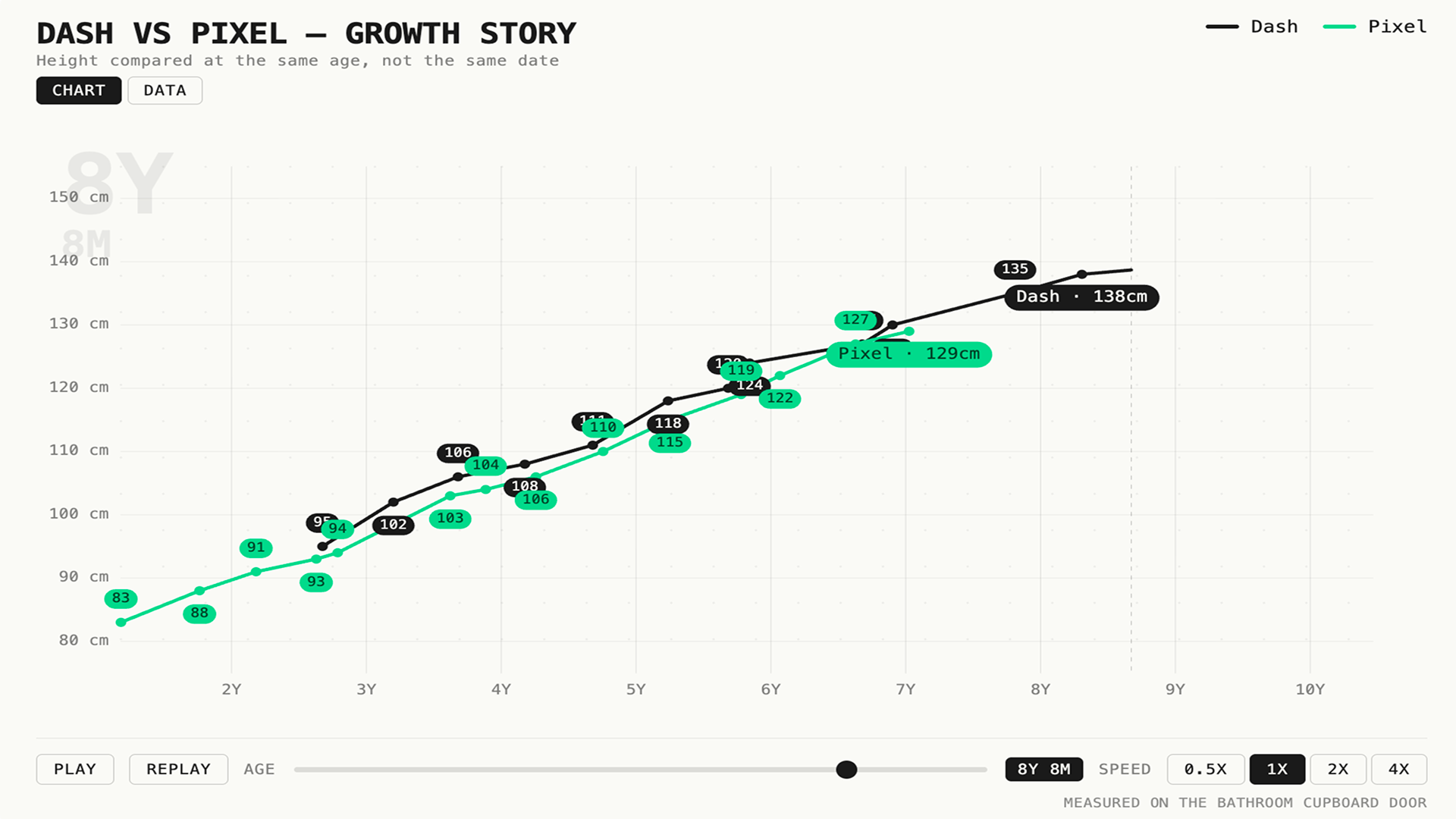
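If you want to run the same kind of check on your own door, comparing inferred heights with tape-measure values takes only a few lines. This is a minimal sketch; the number pairs are made up for illustration and are not the measurements from this experiment.

```python
# Hypothetical (inferred_cm, measured_cm) pairs, invented for illustration.
pairs = [(95.0, 95.5), (110.0, 109.0), (125.0, 126.8)]

# Absolute error per mark, in cm.
errors = [abs(inferred - measured) for inferred, measured in pairs]

max_error = max(errors)
share_within_1cm = sum(e <= 1.0 for e in errors) / len(errors)

print(f"largest error: {max_error:.1f} cm")
print(f"within 1 cm: {share_within_1cm:.0%}")
```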

Telling the story

Now that it had done the hard bit by inferring the data correctly, which I had validated, it was time to tell the story through visualisation. As I was in a rush, I gave it another excellent chart I saw recently as inspiration [Credit - Peter Gostev] and asked it to create the chart in a similar format. This was the output.

[Animated chart: Dash vs Pixel growth story. Height compared at the same age, not the same date.]
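Comparing at the same age rather than the same date means re-indexing each mark by how old the child was when it was drawn. A rough Python sketch of that step follows; the birthdays, dates and heights are all hypothetical, and this is not the code behind the chart.

```python
from datetime import date


def age_in_years(birthday, measured_on):
    """Approximate age in years on the day a mark was drawn."""
    return (measured_on - birthday).days / 365.25


# Hypothetical birthdays and marks, invented for illustration.
birthdays = {"Dash": date(2015, 3, 1), "Pixel": date(2018, 6, 15)}
marks = [
    ("Dash", date(2020, 3, 1), 108.0),
    ("Pixel", date(2023, 6, 15), 110.5),
    ("Dash", date(2022, 9, 1), 124.0),
]

# Group each child's marks as (age, height_cm) so the two can share an axis.
by_age = {}
for name, measured_on, height_cm in marks:
    age = round(age_in_years(birthdays[name], measured_on), 1)
    by_age.setdefault(name, []).append((age, height_cm))

for name, points in by_age.items():
    print(name, sorted(points))
```

Plotting both children against age on the x-axis then lets you see who was taller at, say, five, even though those marks were drawn years apart.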

A picture paints a thousand words

Whilst this test was just a relatively simple, fun Friday experiment, the step up in visual reasoning feels like another major leap in the development of AI models, and one that opens up a world of opportunities for product builders. The old saying 'a picture paints a thousand words' takes on a whole additional layer of meaning in the AI era.

Images, photos, videos (and increasingly 3D/spatial data) all provide so much more context and depth than mere language - you just need to look at a beautiful painting or a photo to appreciate that. And in a world where 'context' provides the fuel for AI models, these kinds of leaps in visual reasoning are going to exponentially increase the demand for, and usage of, more context-rich modalities.

At Loomery, we’re already building products that take advantage of these capabilities. So if you’re thinking about how to leverage AI to build genuinely game-changing products, give us a call - we’d love to chat.

*As measured on the CharXiv Reasoning benchmark